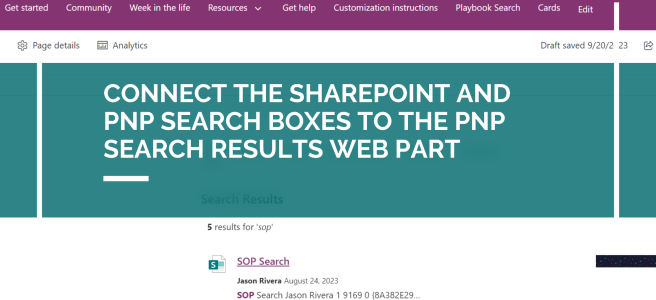

I recently worked on a PnP Modern Search solution with two requirements: the SharePoint search box had to redirect to our new search page, and the search page itself had to contain the PnP Search Box web part. Both search boxes also needed to connect to the PnP Search Results web part.

Connect the SharePoint and PnP Search Boxes to the PnP Search Results Web Part